From Idea to Production in 41 Days

A case study on building a greenfield SaaS product with AI — one engineer, no hand-written code, $2k AI spend.

The Project

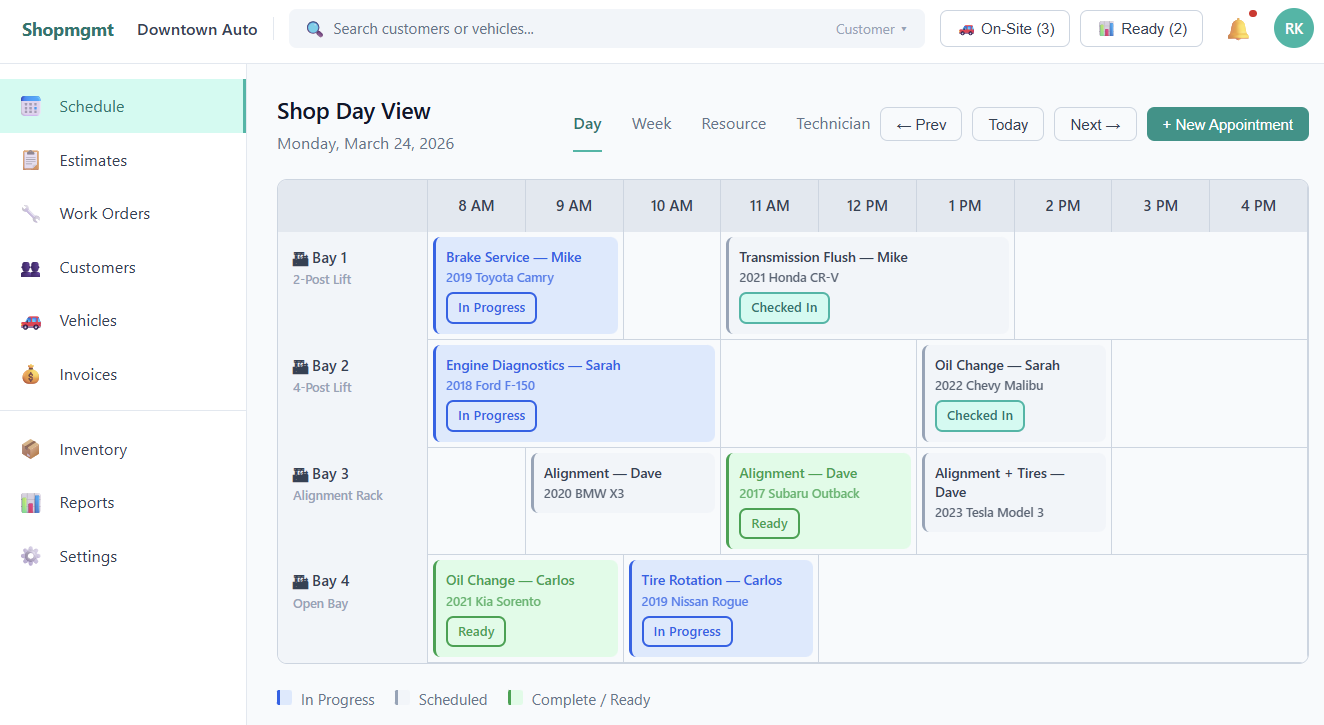

In mid-March 2026, a client came to Senzo with a clear brief: build a multi-location auto repair shop management platform from scratch. ShopMgmt needed scheduling, estimates, work orders, invoicing, technician queues, inventory, customer notifications, QuickBooks sync, role-based access control, and a full AWS production deployment.

Forty-one days later, we demoed it on live production infrastructure.

We didn’t expect to see so much done in a short period of time. This covers most of what our previous system did and more. The more is where ShopMgmt will pay for itself and it is all around operation optimization, by maximizing the usage of our resources and scheduling of our technicians.

— J Imad, Owner, SAV-ON Auto (Cape Cod)

This is better than any of the software we’ve used. Look and feel is very modern, with features that help us optimize our operation.

— Ben Connelly, Owner, Connelly Auto

One engineer. No application code written by hand. Total AI spend: roughly $2,000.

For context: a good two-person team today would need six to eight months of dedicated full-time work to deliver this scope.

What Goes Wrong Without a Process

Most teams trying AI-assisted development run into the same problem. They start fast: a chat window, a prompt, working code in minutes. Then, two weeks in, the codebase starts reflecting the AI’s shifting opinions rather than any coherent design. Nobody catches the drift because nobody owns the direction. The rework ends up costing more than the original approach would have.

The speed was real. The output just wasn’t.

How We Actually Did It

We didn’t start with code. We didn’t even start with a PRD.

We started with a long, messy list of what the client needed: use cases, workflows, personas, edge cases, constraints. From that, we asked the AI to produce a structured PRD and to push back on every assumption in it. Not “write me a requirements doc” but “here’s what we think we need, now tell us what we’re missing and what doesn’t make sense.”

That step, treating the AI as a challenger rather than a scribe, is what most teams skip.

From the PRD, the process stepped through architecture, then UX specification (wireframes and user journey flows built in dedicated design sessions before any UI code was written), then decomposition into 20 epics and 108 user stories. Each story was a complete brief: acceptance criteria, technical tasks, dependencies, test requirements. The AI implemented one story at a time, with the governing documents as its constraint.
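As a rough illustration, a story brief and the one-story-at-a-time ordering could be modeled as below. This is a minimal sketch: the `Story` class, its field names, the `next_story` helper, and the sample backlog are all hypothetical and are not taken from the project's actual artifacts.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Story:
    """One user story written as a complete brief (hypothetical shape)."""
    story_id: str
    epic: str
    acceptance_criteria: list[str]
    technical_tasks: list[str]
    test_requirements: list[str]
    depends_on: list[str] = field(default_factory=list)
    done: bool = False

    def is_complete_brief(self) -> bool:
        # A brief is only ready for implementation when every section is filled in.
        return bool(
            self.acceptance_criteria
            and self.technical_tasks
            and self.test_requirements
        )


def next_story(backlog: list[Story]) -> Optional[Story]:
    """Return the first unfinished, complete story whose dependencies are done."""
    finished = {s.story_id for s in backlog if s.done}
    for story in backlog:
        if story.done or not story.is_complete_brief():
            continue
        if all(dep in finished for dep in story.depends_on):
            return story
    return None


# Invented sample backlog, for illustration only.
backlog = [
    Story("S1", "scheduling", ["Front desk can book a bay"],
          ["Add bookings table"], ["Unit test booking overlap"], done=True),
    Story("S2", "scheduling", ["Technician sees own queue"],
          ["Add queue endpoint"], ["API test for queue"], depends_on=["S1"]),
    Story("S3", "invoicing", ["Invoice totals match estimate"],
          ["Add invoice model"], ["Unit test totals"], depends_on=["S2"]),
]

print(next_story(backlog).story_id)  # S2
```

The point of the sketch is the gate, not the data model: a story with an empty section or an unfinished dependency is never handed to the AI.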

The engineer’s job through all of this was steering, not typing. Reviewing output. Catching drift. Making judgment calls. Deciding what to defer.

And things did get deferred. QuickBooks integration was pushed to post-v1. The staging environment was dropped mid-project because its cost wasn’t justified at the current scale. The estimate approval flow was adjusted after we saw how it worked in practice. The artifacts evolved as implementation revealed new information, so the AI was always working against the current spec, not the original one.

What Got Built

In 41 days of part-time work (two focused days at the start, then a few hours most days), ShopMgmt shipped as a complete product. A few of the harder architectural decisions that made it into the release:

- Multi-tenant data isolation enforced at the PostgreSQL layer via Row-Level Security, not just in application code

- A constraint-based smart scheduler that checks technician availability, bay conflicts, and parts availability in under 500 ms, with soft warnings the front desk can override

- An event-driven notification system across nine trigger points, with configurable templates per location

- A five-role permission model where technicians see job details but never customer names, contact info, or financial data
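To make the last item concrete, here is a minimal sketch of role-based field filtering. It assumes a simple deny-list per role. The field names, the `front_desk` role, and the `visible_work_order` helper are hypothetical; only the rule that technicians never see customer names, contact info, or financial data comes from the project.

```python
from typing import Any

# Categories come from the case study; the concrete field names are hypothetical.
CUSTOMER_FIELDS = {"customer_name", "customer_phone", "customer_email"}
FINANCIAL_FIELDS = {"estimate_total", "invoice_total"}

# Hypothetical role table: each role maps to the fields hidden from it.
# Only two of the five roles are shown here.
HIDDEN_FIELDS: dict[str, set[str]] = {
    "technician": CUSTOMER_FIELDS | FINANCIAL_FIELDS,
    "front_desk": set(),
}


def visible_work_order(work_order: dict[str, Any], role: str) -> dict[str, Any]:
    """Return a copy of the work order without the fields hidden from `role`."""
    if role not in HIDDEN_FIELDS:
        # Deny by default: an unknown role sees nothing rather than everything.
        raise PermissionError(f"unknown role: {role}")
    hidden = HIDDEN_FIELDS[role]
    return {key: value for key, value in work_order.items() if key not in hidden}


# Invented sample record, for illustration only.
work_order = {
    "job": "Replace front brake pads",
    "vehicle": "2018 Honda Civic",
    "bay": 3,
    "customer_name": "A. Customer",
    "customer_phone": "555-0100",
    "invoice_total": 412.50,
}

technician_view = visible_work_order(work_order, "technician")
print(sorted(technician_view))  # ['bay', 'job', 'vehicle']
```

Filtering in one place, and failing closed on unknown roles, keeps the rule auditable instead of scattering it across individual views.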

136 commits. 85%+ test coverage gated by CI. The demo ran against production, not localhost.

Where the Process Earned Its Keep

The AI needed correction throughout. Three patterns came up repeatedly.

Infrastructure scope creep. The first infrastructure pass was technically solid and completely over-built: VPC endpoints, multi-AZ staging, autoscaling configured for load that didn’t exist yet. Reviewed against actual budget and usage constraints, it got stripped down significantly. Infrastructure cost ended up about 70% lower than in the initial pass. Without explicit constraints in the governing documents, that kind of over-engineering accumulates quietly.

Preference for the easier path. When two approaches existed, the AI reliably gravitated toward the one requiring less context. The correction wasn’t better prompting; it was pointing at the architecture document. “This conflicts with the decision in section 3” works better than arguing in the chat window.

Fix churn. About 31% of commits were fixes: code that passed the immediate task but broke something adjacent, missed an edge case, or violated a convention set earlier. The spec and test suite caught most of it before it mattered. That 31% isn’t a sign the process failed. It’s roughly what AI-assisted development costs in overhead when the feedback loops are working. Without automated tests and clear acceptance criteria, that number gets worse and surfaces later.
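For a sense of scale, the fix share can be turned into approximate commit counts using the two figures reported here (136 commits, about 31% fixes). The results are estimates derived from a rounded percentage, not exact counts from the repository.

```python
total_commits = 136  # reported in this case study
fix_share = 0.31     # "about 31%", also reported here

# Derived estimates, since the percentage itself is rounded.
fix_commits = round(total_commits * fix_share)
other_commits = total_commits - fix_commits

print(f"~{fix_commits} fix commits, ~{other_commits} other commits")
# ~42 fix commits, ~94 other commits
```

That works out to roughly one fix commit for every two or so commits of other work.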

What the Process Actually Did to the Work

The structured workflow changed something beyond just organizing the work.

When every planning session has to produce an artifact that governs the next step, you’re forced to make decisions you’d otherwise leave vague. Scope. Technology choices. Acceptance criteria. Each one locked down before it could become an assumption the AI would fill in on its own. The artifacts weren’t just instructions — they were the record of decisions that had already been made.

Those artifacts weren’t a one-time input either. As the codebase grew, the AI needed constant reminders of earlier decisions: conventions established in story 12 were gone from its memory by story 60. The architecture document and story specs were the repeated correction mechanism throughout, not just planning outputs filed away at the start.

They also forced simplification. Dropping the staging environment wasn’t a failure. It was a deliberate call made because the governing constraints said the cost didn’t justify the scale. That decision was easier to make and defend because it was evaluated against explicit criteria, not gut feel.

Early in the project, the PRD and UX spec were shared with the client to confirm alignment. A few gaps surfaced: requirements that hadn’t made it into the first pass. The fundamentals held, and those gaps were caught before any code existed to rework.

If there’s one thing worth doing differently next time, it’s this: define an MVP slice first, ship it, then revise scope based on what you learn before committing to full v1. The structured process compressed the timeline significantly, but even a 41-day build carries assumptions that could have been tested sooner with a tighter first delivery.

The Honest Version of What AI Gives You

Speed, yes. But specifically on implementation, and only when the input is clear. A well-written story turned into working code, tests, and migrations in minutes. Ambiguous input produced output that needed significant correction.

The slowest moments weren’t implementation. They were when the spec was thin or a decision hadn’t been made yet. The AI would produce something, and unsticking it required a human decision rather than a better prompt.

Quality, security, and budget stayed the engineer’s problem throughout. The AI didn’t absorb those responsibilities. Good judgment going in meant better output coming out. The inverse was also true.

The Takeaway

If there’s one thing this project demonstrated, it’s that the investment in structured planning isn’t overhead. It’s where the speed comes from. Not from the AI running fast, but from the AI having something solid to run against.

Start with the business needs. Build the spec through challenge, not dictation. Let the artifacts evolve as you learn. Keep the judgment in human hands.

The typing is the cheap part.

Ready to compress your delivery timeline?

We'll show you where the leverage is.

Book a Strategy Call